I am currently cooking up a system, largely based on bash, to collect remote sensing data from the web. Since I’m using my personal ADSL connection, I can expect many corrupted downloads. I wrote a little script to check file integrity and trigger the download again when needed.

First, how do you know whether a file is corrupted? There are two techniques: either you collect the error codes from your download software (ftp here) and log them somewhere so you can try again, or you assess the integrity of a file simply by scanning it. Let’s consider the second case.

The central problem is that you may have various kinds of files, so no single method can check them all. For example, if we download MODIS files, we get an XML and an HDF file. For a given file, the script must first guess the file type, then choose an ad hoc function for checking it. We assume here that the file type can be found from the file extension.

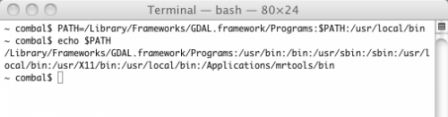

To get the file extension from a full file path, simply remove the file path and keep what you find after the last ‘.’, which is a job for sed:

    echo "$fileFullPath" | sed 's/^.*\///' | sed 's/^.*\.//' | tr '[:upper:]' '[:lower:]'

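sed 's/^.*\///' removes the file path: starting from the beginning of the string (^), it replaces any run of characters (.*) followed by a slash (\/) with nothing. Because .* is greedy, the match extends to the last slash. The second sed then removes everything up to the last dot (remember to escape the dot: \.), and tr converts the result to lower case.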

Note that the regular expression flavour of your system may give different results: try it on a few file names first.
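As a hedged alternative, the same extraction can be sketched with bash parameter expansion instead of sed, which avoids depending on the local sed flavour. The function name getExtension is an assumption made for this example; it is not part of the original script.

```bash
#!/usr/bin/env bash
# getExtension: hypothetical helper, prints the lower-case extension of a path.
getExtension() {
    local name="${1##*/}"      # drop everything up to the last '/'
    local ext="${name##*.}"    # drop everything up to the last '.'
    # tr is used so that this also works on bash versions without ${var,,}
    printf '%s\n' "$ext" | tr '[:upper:]' '[:lower:]'
}

# Try a few (made-up) paths to see what your system returns
for p in /data/modis/MOD13Q1.A2010001.hdf /data/modis/MOD13Q1.A2010001.hdf.XML image.TIFF noextension; do
    printf '%s -> %s\n' "$p" "$(getExtension "$p")"
done
```

Note that a name without any dot comes back whole (lower-cased), exactly as with the sed version, so it will not match any known extension.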

Now we need to call the appropriate test routine depending on the detected file extension: a simple case statement will do this job. Finally, let’s wrap everything up in a single function (selectFunc): call it with a file name, and it prints the name of the test function to call.

    function selectFunc(){
        # receives a file name and prints the name of the matching integrity test function
        if [ $# -ne 1 ]; then
            # message goes to stderr so that it is not captured as a function name
            echo "selectFunc expects exactly one parameter." >&2
            return 1
        fi
        local selector selected=''
        selector=$(echo "$1" | sed 's/^.*\///' | sed 's/^.*\.//' | tr '[:upper:]' '[:lower:]')
        case $selector in
            xml) selected='doTestXML';;
            hdf) selected='doTestImg';;
            tif | tiff ) selected='doTestImg';;
            *) selected='';;   # unknown extension: an empty name is printed
        esac
        # return the selected function
        echo "$selected"
    }

We can see that there is a pending problem with files that have no extension, like the ENVI native file format (it does not require an extension, only a companion text file). To improve this situation, you can either force an extension on this kind of file (like .bil or .bsq for ENVI files), or handle the case of a missing extension with additional tests. For example, one could imagine calling gdalinfo in this case.
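One way to handle the missing-extension case could look like the sketch below. It relies on the fact that a base name without any dot has no extension at all. The function name selectFuncOrDefault and the choice of doTestImg (that is, gdalinfo) as the fallback are assumptions made for illustration.

```bash
#!/usr/bin/env bash
# selectFuncOrDefault: hypothetical variant of selectFunc that treats
# extension-less files as images to be checked with gdalinfo.
selectFuncOrDefault() {
    local base ext
    base=$(printf '%s\n' "$1" | sed 's/^.*\///')   # strip the directory part
    case $base in
        *.*) ext=$(printf '%s\n' "$base" | sed 's/^.*\.//' | tr '[:upper:]' '[:lower:]') ;;
        *)   ext='' ;;                             # no dot: no extension
    esac
    case $ext in
        xml)          echo 'doTestXML' ;;
        hdf|tif|tiff) echo 'doTestImg' ;;
        '')           echo 'doTestImg' ;;          # assumption: let gdalinfo try it
        *)            return 1 ;;                  # unknown extension: nothing selected
    esac
}
```

With this variant, a call such as selectFuncOrDefault /data/envi/scene_01 (a made-up path) prints doTestImg, while an unknown extension prints nothing and returns 1.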

Now we just have to write some test functions.

XML files are rather easy to test. Your OS should have a tool for that. On Linux, consider XMLStarlet, whose command line is

    xml val $file

(on some distributions the binary is called xmlstarlet instead of xml).

For images, you should be able to test most of them with gdalinfo.

The functions return 0 if everything was OK and a non-zero code otherwise. Actually, the test functions simply return the exit code of the underlying tool (an XML checker such as XMLStarlet, and gdalinfo). If you use other kinds of tests, you may need to translate their exit codes.
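Translating exit codes can be sketched with a small wrapper that runs any command quietly and maps every non-zero exit code to 1. The name toZeroOne is an assumption made for this example.

```bash
#!/usr/bin/env bash
# toZeroOne: hypothetical wrapper. Runs the given command with its output
# discarded and returns 0 on success, 1 for any non-zero exit code.
toZeroOne() {
    if "$@" > /dev/null 2>&1; then
        return 0
    fi
    return 1
}
```

It would be called as toZeroOne gdalinfo "$file". A tool that reports problems only in its output, and not through its exit code, would need a different translation (for example, testing whether the output is empty).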

At the end, we’ve got something like:

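    function doTestXML(){
        if [ $# -ne 1 ]; then
            echo "doTestXML expects exactly one parameter." >&2
            return 1   # return, not exit, so the calling script keeps running
        fi
        # xmlwf (shipped with expat) checks that the file is well-formed XML.
        # Some older xmlwf versions are documented to exit with 0 even for a
        # malformed file: verify the behaviour on a known-bad file first.
        xmlwf "$1" > /dev/null 2>&1
        return $?
    }

    function doTestImg(){
        if [ $# -ne 1 ]; then
            echo "doTestImg expects exactly one parameter." >&2
            return 1
        fi
        gdalinfo "$1" > /dev/null 2>&1
        return $?
    }

Note that we send any output of the commands to /dev/null and only care about the return code ($?).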

Now, to use this code, first get the name of the test function to call:

    myTest=$(selectFunc "$file")

and then call it:

    $myTest "$file"
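To go from a single file to a whole download directory, the two steps above can be wrapped in a loop that prints the files to download again. This is only a sketch: the name listCorrupted is an assumption, and listing files of unknown type as failures (rather than skipping them) is a design choice made here so that nothing is silently ignored.

```bash
#!/usr/bin/env bash
# listCorrupted: hypothetical helper. $1 is the name of a selector function
# (such as selectFunc); the remaining arguments are the files to check.
# Prints the files that fail their test or for which no test is known.
listCorrupted() {
    local selector=$1 f testFunc
    shift
    for f in "$@"; do
        # an empty name means the selector did not recognise the file
        testFunc=$("$selector" "$f") || testFunc=''
        if [ -z "$testFunc" ] || ! "$testFunc" "$f"; then
            printf '%s\n' "$f"
        fi
    done
}
```

A call such as listCorrupted selectFunc /data/modis/* > toDownloadAgain.txt (path and file name are made up) would then give the download script its retry list.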

A functional copy of the code is found there.